ChatGPT CEO Urges Federal Regulation to Prevent Future AI Harm

As artificial intelligence matures, it’s creating lots of buzz and serious debate. The buzz is about what bots can, cannot and should not do. The debate is over whether AI is an ultimate boon or a potential apocalypse, a useful tool or a destructive beast.

Sam Altman, CEO of the company that created ChatGPT, told Congress that AI could “cause significant harm to the world” as he called for federal regulation of the rapidly evolving technology, which he says has an ultimate capability to “outthink and manipulate humans.”

“This is not science fiction,” according to Geoffrey Hinton, the godfather of AI who recently retired from Google. He says AI technology advances are accelerating rapidly through fierce competition so that smarter-than-human machines could arrive as early as five years from now. “It’s as if aliens have landed or are just about to land.”

The immediate fascination with AI is its ability to respond to commands to write a term paper or compose a haiku. “We really get taken in because bots speak good English and they’re very useful,” Hinton warns. “They can write poetry, they can answer boring letters. But they’re really aliens.”

Other researchers and engineers say existential dangers are exaggerated, even while warning of practical concerns that should be addressed such as copyright chaos, digital privacy intrusion, greater ability to defeat cyber-defenses and AI-controlled weaponry.

“Out of the actively practicing researchers in this discipline, far more are centered on current risk than existential risk,” explains Sara Hooker, director of Cohere for AI and a former Google researcher. One current risk she cited is bots perpetuating racist and sexist tropes by drawing on existing internet content without adequate filters.

Another practical problem is accuracy. A New York attorney representing a plaintiff injured during a flight by a runaway serving cart threw himself at the mercy of the court after submitting a legal brief citing cases that didn’t exist. The attorney said he used ChatGPT for help with legal research and the bot apparently invented the cases.

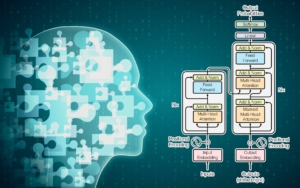

AI is advanced machine learning software based on large language models. Google CEO Sundar Pichai told 60 Minutes that AI relies on algorithms scouring and “just repeating” data and images accumulated on the internet. The technology, he explained, is already widely used in social media, search engines and image-recognition software.

Fierce Competition Drives Advances

With fierce competition, AI has moved up another technological notch to what’s called generative thinking. These advanced AI programs so far can respond to prompts, engage in conversations and write computer code. The fear is they could do much more.

The race among technology companies is occurring with virtually no oversight, according to Anthony Aguirre, executive director of the Future of Life Institute, an organization founded in 2014 to study existential risks to society. The group began researching the possibility of AI destroying humanity in 2015 with a grant from Elon Musk, who invested in OpenAI, the company that created ChatGPT, but has since backed away from the company and raised concerns.

The fear is AI programs will develop an ability to think for themselves and take over their own control. “What it will take to constrain them from going off the rails will become more and more complicated,” Aguirre says. “That is something that some science fiction has managed to capture reasonably well.” It’s why Aguirre has called for a six-month pause on AI development to allow time to install some semblance of oversight.

Altman disagrees with pausing development and instead favors allowing rapid development to continue so new tools can emerge, allowing problems to be spotted and issues addressed.

Former Google researchers aren’t as sanguine as Altman. Timnit Gebru and Margaret Mitchell co-wrote a paper with University of Washington academics Emily M. Bender and Angelina McMillan-Major arguing that large language models are just “parrots” of what exists on the internet. One of their major concerns is bots recycling racist and sexist content that flows freely on social media and internet sites, but which bots can’t fully differentiate as inaccurate or inappropriate.

Another of their concerns is the strong push by technology giants to dominate the field, “rapidly centralizing power and increasing social inequities.” The latter concern apparently led Google to dismiss Gebru and Mitchell.

On cue, Microsoft researchers working with OpenAI on GPT-4 say they have detected “sparks of artificial general intelligence.” Skeptics question the existence of those sentient sparks and attribute the claim to Microsoft trying to puff up its brand. Nevertheless, Microsoft researchers claim their technology developed a spatial and visual understanding of the world based on its training text. GPT-4, they said, could draw unicorns and describe how to stack random objects, including eggs, on top of each other in such a way that the eggs wouldn’t break. They also said GPT-4 could solve a range of problems from mathematics to medicine without special prompts.

Reinforcing the urgency, shares of Nvidia soared on news its revenues from graphics chips for gaming rose to $7 billion in the last quarter and are projected to reach $11 billion this quarter. Its CEO attributed the unexpected revenue boost to “broader use of artificial intelligence.”

“Because of their unique features,” according to a report by Georgetown University’s Center for Security and Emerging Technology, “AI chips are tens or even thousands of times faster and more efficient than CPUs for training and inference of AI algorithms.”

Whether to worry or not pivots on one’s definition of “smart.” No one questions that AI programs are getting smarter. The roiling debate is over how smart is too smart for the good of mankind.

[This blog was based on an article by Gerrit De Vynck, who covers technology issues for The Washington Post.]

What Are Large Language Models

A large language model is a trained deep-learning model that understands and generates text in a human-like fashion. The “topbots” to date are:

Background on Sam Altman

Altman, 38, is a computer programmer turned entrepreneur. He got his first computer at age eight and attended Stanford University studying computer science for a year before dropping out.

When he was 19, Altman co-founded Loopt, a social networking mobile application. Despite raising $30 million in venture capital investments, the company never took off and was acquired by Green Dot Corporation in 2012. Altman was CEO of Reddit for eight days in 2014. He became CEO of OpenAI in 2019.

Altman has been involved in angel investing in technology startups and nuclear energy companies. He cofounded Tools for Humanity, which is building a biometric system to validate a person’s identity using a cryptocurrency called Worldcoin. He has also been active in politics and philanthropy and has been recognized as an entrepreneur and investor by Time, Businessweek and Forbes.

Altman’s congressional testimony

Altman recently testified before the Senate Judiciary Committee in favor of regulating the AI industry. “My worst fears are that we, the field, the technology, the industry, cause significant harm to the world,” he said. “If this technology goes wrong, it can go quite wrong and we want to be vocal about that.”

The government regulation he supported included licensing and testing requirements for AI companies. He also supported allowing owners of data to opt out of large language model training sets. Altman said it may be easier to monitor the direction of AI technology if there are fewer high-end competitors. Here are segments of his testimony from YouTube: https://www.youtube.com/watch?v=IB0y2jidZk.